The edge AI inference products and technology developers have been waiting for

When developers need AI at the edge, they turn to EdgeCortix for AI inference products that fit their workflow and meet challenging design goals.

Supported Frameworks & Applications

SAKURA®-II Edge AI Platform

Full-Stack Solution: Integrated Platform Increases Ecosystem Value Over Time

AI Accelerator

Efficient Hardware

Unique Software

Proprietary Architecture

Modules and Cards powered by the latest SAKURA-II AI Accelerators

Deployable Systems

EdgeCortix’s SAKURA-II AI Accelerator Brings Low-Power Generative AI to Raspberry Pi 5 and other Arm-Based Platforms

SAKURA-II AI Accelerator

SAKURA-II is an advanced 60 TOPS AI accelerator providing best-in-class efficiency from vision to Generative AI, driven by our low-latency Dynamic Neural Accelerator (DNA). SAKURA-II is designed for applications that require fast, real-time Batch=1 AI inferencing, delivering excellent performance in a small-footprint, low-power silicon device.
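To make the Batch=1 requirement concrete, the sketch below shows one generic way to measure single-sample inference latency. It is not EdgeCortix code and does not use the MERA API: `measure_batch1_latency` and `placeholder_infer` are hypothetical names introduced here, and the placeholder function merely stands in for whatever inference call a real deployment would make.

```python
import statistics
import time
from typing import Callable, Dict, Sequence


def measure_batch1_latency(
    infer: Callable[[Sequence[float]], object],
    sample: Sequence[float],
    warmup: int = 5,
    runs: int = 50,
) -> Dict[str, float]:
    """Time single-sample (Batch=1) calls; return latency stats in milliseconds."""
    for _ in range(warmup):
        infer(sample)  # warm-up calls are executed but not timed

    latencies_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        infer(sample)  # exactly one input per call, i.e. Batch=1
        latencies_ms.append((time.perf_counter() - start) * 1000.0)

    latencies_ms.sort()
    p99_index = min(len(latencies_ms) - 1, int(0.99 * len(latencies_ms)))
    return {
        "mean_ms": statistics.fmean(latencies_ms),
        "median_ms": statistics.median(latencies_ms),
        "p99_ms": latencies_ms[p99_index],
        "max_ms": latencies_ms[-1],
    }


def placeholder_infer(sample: Sequence[float]) -> float:
    # Hypothetical stand-in for a real inference call (assumption, not a vendor API).
    return sum(x * x for x in sample)


if __name__ == "__main__":
    print(measure_batch1_latency(placeholder_infer, [0.1] * 1024))
```

Reporting the median and tail (p99, max) alongside the mean is a common choice for real-time workloads, where the slowest responses usually matter more than the average.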

The DNA core driving SAKURA-II is run-time reconfigurable, enabling multiple deep neural network models to run concurrently while maintaining critical performance characteristics.

Our MERA compiler and software framework provides a robust platform for deploying the latest neural network models in a framework-agnostic manner.

Multiple form factor platforms are available for system integration, easy evaluation, and fast time-to-market.

MERA™ Compiler and Software Framework

MERA is a compiler and software framework providing all the tools, APIs, code generator, and runtime needed to deploy a pre-trained deep neural network. MERA offers software developers and data scientists familiar workflows with native support for Hugging Face, TensorFlow Lite, and ONNX. MERA is the companion to the Dynamic Neural Accelerator IP (DNA IP) and provides the entire software stack for developing edge AI inference applications, from modeling to deployment.

Dynamic Neural Accelerator Architecture

Dynamic Neural Accelerator (DNA) is a flexible, modular neural accelerator IP core with run-time reconfigurable interconnects between compute units, achieving exceptional parallelism and efficiency through dynamic grouping. Using a patented approach, EdgeCortix reconfigures data paths between DNA engines in real time to achieve outstanding parallelism and reduce on-chip memory bandwidth, allowing faster, more efficient hardware execution. DNA IP works in conjunction with the MERA software stack to optimize computation order and resource allocation when scheduling tasks for neural networks.

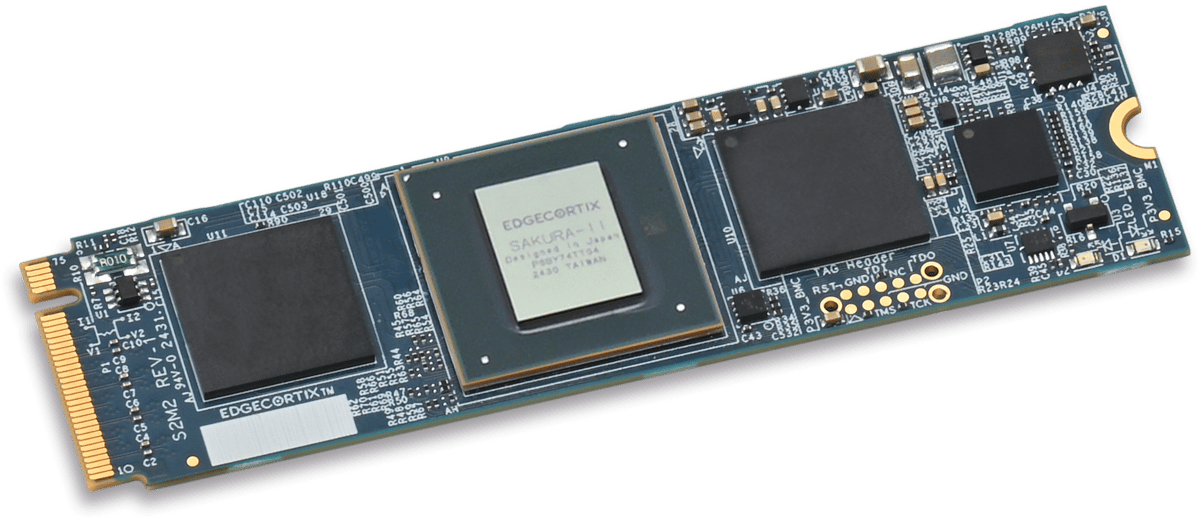

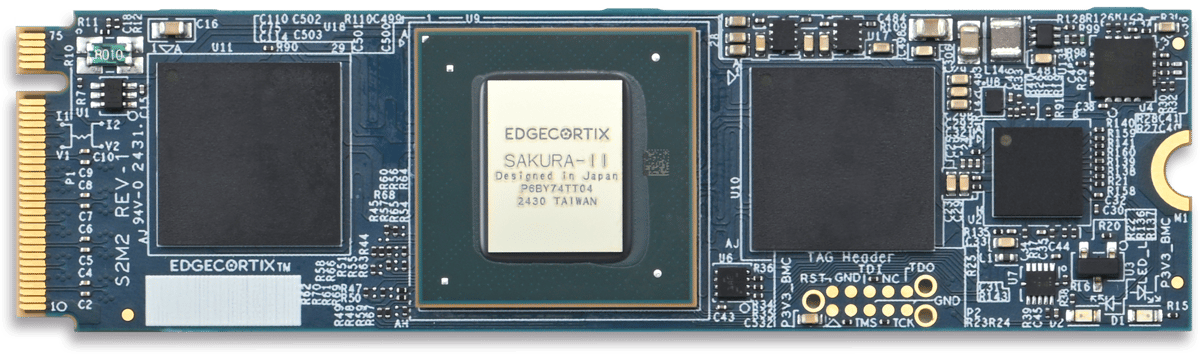

SAKURA-II Modules and Cards

SAKURA-II modules and cards are architected to run the latest vision and Generative AI models with market-leading energy efficiency and low latency.

SAKURA-II M.2 modules are high-performance, 60 TOPS, edge AI accelerators in the small M.2 2280 form factor and are the best choice for space-constrained designs.

SAKURA-II PCIe Cards are high-performance, up to 120 TOPS, edge AI accelerators in the low-profile, single-slot PCIe form factor. Single and dual options are available; the best choice depends on the overall performance needed.

SAKURA-I AI Accelerator

EdgeCortix introduced its first silicon device, the SAKURA-I AI accelerator, in 2022. This device used the initial iteration of the low-latency Dynamic Neural Accelerator® (DNA) architecture alongside an early version of the MERA software stack and framework. It was primarily designed for convolutional neural network (CNN) AI workloads. The SAKURA-I platform enabled EdgeCortix to validate the DNA architecture and, with customer feedback, the company made significant enhancements, leading to the development of the next-generation DNA-II architecture, which focuses on Generative AI and is now implemented in SAKURA-II.

Many customers used SAKURA-I for the initial development of their AI designs, facilitated by its convenient Low Profile PCIe Card format for evaluation. Although SAKURA-I will not be a production-level device, it provided customers with an early advantage in their AI projects, particularly in vision-related applications. Building on the success of SAKURA-I, EdgeCortix enhanced the platform to support transformer neural network (TNN) models, enabling SAKURA-II to efficiently handle Generative AI applications at the edge.

EdgeCortix Platform Solves Critical Edge AI Market Challenges

SAKURA-II M.2 Modules and PCIe Cards

EdgeCortix SAKURA-II can be easily integrated into a host system for software development and AI model inference tasks.

Order an M.2 Module or a PCIe Card and get started today!

"Given the tectonic shift in information processing at the edge, companies are now seeking near-cloud-level performance where data curation and AI-driven decision making can happen together. Due to this shift, the market opportunity for the EdgeCortix solutions set is massive, driven by the practical business need across multiple sectors which require both low power and cost-efficient intelligent solutions. Given the exponential global growth in both data and devices, I am eager to support EdgeCortix in their endeavor to transform the edge AI market with an industry-leading IP portfolio that can deliver performance with orders of magnitude better energy efficiency and a lower total cost of ownership than existing solutions."

"Improving the performance and the energy efficiency of our network infrastructure is a major challenge for the future. Our expectation of EdgeCortix is to be a partner who can provide both the IP and expertise that is needed to tackle these challenges simultaneously."

"With the unprecedented growth of AI/Machine learning workloads across industries, the solution we're delivering with leading IP provider EdgeCortix complements BittWare's Intel Agilex FPGA-based product portfolio. Our customers have been searching for this level of AI inferencing solution to increase performance while lowering risk and cost across a multitude of business needs both today and in the future."

“EdgeCortix is in a truly unique market position. Beyond simply taking advantage of the massive need and growth opportunity in leveraging AI across many key business sectors, it’s the business strategy with respect to how they develop their solutions for their go-to-market that will be the great differentiator. In my experience, most technology companies focus very myopically on delivering great code or perhaps semiconductor design. EdgeCortix’s secret sauce is in how they’ve co-developed their IP, applying equal importance to both the software IP and the chip design, creating a symbiotic software-centric hardware ecosystem. This sets EdgeCortix apart in the marketplace.”

"We recognized immediately the value of adding the MERA compiler and associated tool set to the RZ/V MPU series, as we expect many of our customers to implement application software including AI technology. As we drive innovation to meet our customer's needs, we are collaborating with EdgeCortix to rapidly provide our customers with robust, high-performance and flexible AI-inference solutions. The EdgeCortix team has been terrific, and we are excited by the future opportunities and possibilities for this ongoing relationship."