About EdgeCortix

Innovation Focusing on AI Efficiency at the Edge

EdgeCortix Mission

To deliver near cloud-level performance at the edge, with orders of magnitude better energy efficiency and processing speed, drastically reducing operating costs.

The EdgeCortix Story

Dr. Sakyasingha Dasgupta, our CEO and Founder, is a seasoned technologist who has been at the forefront of artificial intelligence systems for the past two decades. His experience spans public research institutes such as The Max Planck Society and RIKEN, as well as leading corporations like Microsoft and IBM Research. This journey led to the founding of EdgeCortix, a company committed to optimizing AI processing at the edge while minimizing power consumption. Sakya observed that many powerful AI solutions were ineffective in edge scenarios due to excessive power consumption. Unlike most AI companies that traditionally prioritize silicon devices and adapt software afterward, Sakya believed there had to be a better approach.

In July 2019, Sakya established EdgeCortix as a fabless semiconductor company headquartered in Tokyo. Seeing the possibilities for growth in AI, the company has garnered funding from a marquee group of investors including Japan’s foremost venture capital firm SBI Investment Co. Ltd, as well as Global Hands-On VC (GHOVC), a leading Japan-US collaboration-focused VC. EdgeCortix has also received significant investment from Renesas Electronics Corporation, a long-term customer and a premier global supplier of semiconductor solutions.

Focused on developing specialized AI accelerators for edge computing through a software-driven approach, our engineering teams in Japan have spent the past five years developing the MERA Compiler and Software framework, along with our Dynamic Neural Accelerator (DNA), a novel runtime-reconfigurable processor architecture. That work has produced 20+ patents granted or applied for. We validated this architecture and designed the initial SAKURA silicon solution based on our patented DNA architecture.

Recently, we unveiled SAKURA-II, our next-generation production silicon. SAKURA-II excels in flexibility, power efficiency, and real-time processing of complex models including Generative AI at the edge. With industry-leading memory capacity and bandwidth, SAKURA-II uses the advanced DNA architecture to seamlessly handle vision tasks and Large Language Models (LLMs) in low-power environments.

With a global operational footprint, including Japan, India, Singapore, and the United States, we are very proud of our journey and our team’s accomplishments so far, and we recognize that this is just the beginning. We invite you to explore our website and encourage you to reach out and learn how EdgeCortix can address your unique edge inference needs.

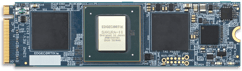

SAKURA-II AI Accelerator Platform

SAKURA-II is an advanced 60 TOPS AI accelerator providing best-in-class efficiency from vision to Generative AI, driven by our low-latency Dynamic Neural Accelerator (DNA). SAKURA-II is designed for applications requiring fast, real-time Batch=1 AI inferencing with excellent performance in a small-footprint, low-power silicon device.

The DNA core driving SAKURA-II is run-time reconfigurable, enabling multiple deep neural network models to run concurrently while maintaining critical performance characteristics.

Our MERA compiler and software framework provides a robust platform for deploying the latest neural network models in a framework-agnostic manner.

Multiple form factor platforms are available for system integration and fast time-to-market.

AI Accelerator

Efficient Hardware

Proprietary Architecture

Unique Software

Modules and Cards powered by the latest SAKURA-II AI Accelerators

Deployable Systems

EdgeCortix Business Summary

Maximizing Business Value with Energy Efficient Generative Edge AI Solutions

EdgeCortix Platform Solves Critical Edge AI Market Challenges

EdgeCortix commanding a lead in efficient edge AI hardware and software, as seen on:

"Given the tectonic shift in information processing at the edge, companies are now seeking near cloud-level performance where data curation and AI-driven decision making can happen together. Due to this shift, the market opportunity for the EdgeCortix solution set is massive, driven by the practical business need across multiple sectors that require both low-power and cost-efficient intelligent solutions. Given the exponential global growth in both data and devices, I am eager to support EdgeCortix in their endeavor to transform the edge AI market with an industry-leading IP portfolio that can deliver performance with orders of magnitude better energy efficiency and a lower total cost of ownership than existing solutions."

"Improving the performance and the energy efficiency of our network infrastructure is a major challenge for the future. Our expectation of EdgeCortix is to be a partner who can provide both the IP and expertise that is needed to tackle these challenges simultaneously."

"With the unprecedented growth of AI/Machine learning workloads across industries, the solution we're delivering with leading IP provider EdgeCortix complements BittWare's Intel Agilex FPGA-based product portfolio. Our customers have been searching for this level of AI inferencing solution to increase performance while lowering risk and cost across a multitude of business needs both today and in the future."

“EdgeCortix is in a truly unique market position. Beyond simply taking advantage of the massive need and growth opportunity in leveraging AI across many key business sectors, it’s the business strategy with respect to how they develop their solutions for their go-to-market that will be the great differentiator. In my experience, most technology companies focus very myopically on delivering great code or perhaps semiconductor design. EdgeCortix’s secret sauce is in how they’ve co-developed their IP, applying equal importance to both the software IP and the chip design, creating a symbiotic software-centric hardware ecosystem. This sets EdgeCortix apart in the marketplace.”

"We recognized immediately the value of adding the MERA compiler and associated tool set to the RZ/V MPU series, as we expect many of our customers to implement application software including AI technology. As we drive innovation to meet our customers' needs, we are collaborating with EdgeCortix to rapidly provide our customers with robust, high-performance and flexible AI-inference solutions. The EdgeCortix team has been terrific, and we are excited by the future opportunities and possibilities for this ongoing relationship."